May 1, 2026

- AI Technology

- 3D Visualization

Why 2D to 3D Floor Plans Are So Hard | VirtualSpaces

Hemanth Velury

CEO & Co-Founder

On paper, 2D to 3D looks simple: read the drawing, extrude the walls, drop in furniture, render.

In practice, it remains a hard and largely unsolved computer vision problem outside controlled settings, because floor plans compress complex 3D intent into a dense, lossy, highly stylized 2D language.

A typical residential floor plan mixes:

- Ultra-thin lines for walls, thicker lines for structure, and broken lines for openings.

- Dozens of domain-specific symbols for doors, windows, stairs, plumbing, and electrical.

- Crowded text at odd angles for room labels, dimensions, and material notes, often overlapping the graphics.

Humans learn this visual code over years of studio work.

Off-the-shelf computer vision models see it as noisy, cluttered clip art and often fail at the basics: which lines are walls, which rectangles are furniture, which number is the scale, and which label belongs to which room.
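To make one of those basics concrete, here is a minimal sketch of label-to-room assignment. It assumes room outlines have already been recovered as polygons and that OCR has produced a text string plus an anchor point for each label; the function names and sample coordinates are illustrative, not part of any particular product.

```python
from typing import Dict, List, Optional, Tuple

Point = Tuple[float, float]
Polygon = List[Point]


def point_in_polygon(p: Point, poly: Polygon) -> bool:
    """Ray-casting test: count crossings of a ray cast in +x from p."""
    x, y = p
    inside = False
    n = len(poly)
    for i in range(n):
        x1, y1 = poly[i]
        x2, y2 = poly[(i + 1) % n]
        # Only edges that straddle the horizontal line through p can be crossed
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def assign_labels(
    rooms: Dict[str, Polygon], labels: List[Tuple[str, Point]]
) -> Dict[str, Optional[str]]:
    """Map each label text to the id of the room containing its anchor point."""
    result: Dict[str, Optional[str]] = {}
    for text, anchor in labels:
        result[text] = next(
            (rid for rid, poly in rooms.items() if point_in_polygon(anchor, poly)),
            None,  # label sits outside every room, e.g. a north arrow caption
        )
    return result


# Hypothetical sample data: two adjacent rooms and three OCR'd labels
rooms = {
    "r1": [(0, 0), (4, 0), (4, 3), (0, 3)],
    "r2": [(4, 0), (7, 0), (7, 3), (4, 3)],
}
labels = [("Bedroom", (2.0, 1.5)), ("Utility", (5.5, 1.0)), ("North", (10.0, 10.0))]
assignment = assign_labels(rooms, labels)
```

Real plans complicate this quickly: labels overlap walls, sit outside small rooms with leader lines, or are rotated, which is part of why generic models struggle here.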

What Existing Research Has Achieved in 2D to 3D

Academic work has pushed the field forward, but each approach tends to solve only part of what architects and interior designers need.

Several studies reconstruct 3D models directly from 2D floor plans or CAD drawings.

One method parses CAD floor plans into components, restores wall integrity, subdivides space into polygons, then reconstructs a 3D indoor model for smart city applications. An earlier vector-based approach extrudes outer loops, cuts inner openings, and constructs door and window models to get a clean 3D shell.
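The extrusion step in the vector-based approach can be illustrated with a short sketch. This is not the cited method's code; it only shows the basic idea of lifting a closed 2D loop into a prism, assuming a simple counter-clockwise polygon and a hypothetical wall height. Cutting door and window openings is left out.

```python
from typing import List, Tuple

Point2D = Tuple[float, float]
Vertex = Tuple[float, float, float]
Face = Tuple[int, ...]


def extrude_polygon(
    footprint: List[Point2D], height: float
) -> Tuple[List[Vertex], List[Face]]:
    """Extrude a simple counter-clockwise 2D polygon into a closed prism.

    Returns vertices and faces (tuples of vertex indices): one quad per
    footprint edge, plus a bottom cap and a top cap.
    """
    n = len(footprint)
    if n < 3 or height <= 0:
        raise ValueError("need at least 3 points and a positive height")
    bottom = [(x, y, 0.0) for x, y in footprint]
    top = [(x, y, height) for x, y in footprint]
    vertices = bottom + top
    faces: List[Face] = []
    for i in range(n):
        j = (i + 1) % n
        # Side quad, wound so its normal points outward for a CCW footprint
        faces.append((i, j, n + j, n + i))
    faces.append(tuple(reversed(range(n))))  # bottom cap, facing down
    faces.append(tuple(range(n, 2 * n)))     # top cap, facing up
    return vertices, faces


# Hypothetical wall: 4 m long, 0.2 m thick, 2.7 m high
wall_footprint = [(0.0, 0.0), (4.0, 0.0), (4.0, 0.2), (0.0, 0.2)]
vertices, faces = extrude_polygon(wall_footprint, height=2.7)
```

For a rectangular footprint this yields 8 vertices and 6 faces, a closed box that a later step could triangulate or cut openings into.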

More recent work applies deep learning:

- One pipeline uses image processing, feature extraction, and augmented reality to turn smartphone scans of floor plans into navigable 3D models.

- Another reconstructs full 3D residential layouts from a single floor plan by using deep networks for scale estimation, wall and opening segmentation, and skeleton curve analysis.

- A separate project builds a CNN-driven system that segments walls, doors, and windows, forms a layout graph, predicts heights, and generates an editable 3D mesh.

Research is also starting to combine floor plans with photos.

Cornell's C3Po model aligns real interior photos with floor plans using a large paired dataset and reduces pixel-to-plan correspondence errors by about one third versus prior methods.

These systems prove that 2D to 3D is solvable in controlled settings, yet most are prototypes, limited by strict input formats and narrow deployment contexts. They rarely deal with the messy, heterogeneous, low-quality plans that architects and residential developers handle every day.

Snapshot of research approaches:

| Approach | Typical Input | Strengths | Practical Limitations for Homes |
|---|---|---|---|
| Classical CAD extrusion | Clean vector CAD floor plans | Precise geometry, good for BIM pipelines | Assumes perfect CAD, struggles with scans, limited semantics for interior design decisions |
| Image processing plus AR | Smartphone photo of printed blueprint | Low hardware requirements, interactive AR view | Sensitive to noise, difficult to map all symbols and text correctly on real projects |
| Deep learning segmentation plus reconstruction | Raster floor plan image | Learns to recognize walls, doors, windows, and infer height and layout automatically | Needs curated datasets and often expects standardized legends and drawing styles |
| Floor plan plus photos (C3Po) | Floor plan plus interior photos | Better texture realism, connects lived space to plan | Complex pipelines, heavy data requirements, still early for everyday interior workflows |

The direction is clear: 2D to 3D is moving from handcrafted rules to learned systems.

What is still missing is a production-grade engine that treats floor plans as their own specialized visual language and uses that to deliver real-time 3D visualization for residential design and sales.

Floor Plans as a Specialized Visual Language

The key shift at VirtualSpaces is treating floor plans as a specialized visual language rather than just images with text.

In linguistic terms, the symbols, line weights, hatch patterns, and annotations form a structured grammar that encodes how people design homes and how families live in them.

Our engine reads that grammar in several layers:

- Geometry layer: walls, doors, windows, structural openings, and the basic topology of rooms and circulation.

- Semantic layer: room types, labels, dimensions, orientation, adjacency, and connectivity.

- Intent layer: likely furniture zones, focal walls, daylight directions, privacy gradients, and usable wall lengths.
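One way to picture how these layers stack is as a small data model. The sketch below is an illustration only: the class names, fields, and the "usable wall length" rule (wall length minus opening widths, assuming openings do not overlap) are assumptions made for this example, not the engine's actual schema.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Opening:
    kind: str        # "door" or "window"
    width_m: float


@dataclass
class Wall:
    # Geometry layer: where the wall is and what interrupts it
    start: Tuple[float, float]
    end: Tuple[float, float]
    openings: List[Opening] = field(default_factory=list)

    @property
    def length_m(self) -> float:
        dx = self.end[0] - self.start[0]
        dy = self.end[1] - self.start[1]
        return (dx * dx + dy * dy) ** 0.5

    def usable_length_m(self) -> float:
        """Intent-layer estimate: wall length not taken up by openings."""
        return max(0.0, self.length_m - sum(o.width_m for o in self.openings))


@dataclass
class Room:
    label: str           # semantic layer, e.g. "Study" or "Utility"
    walls: List[Wall]    # geometry layer

    def longest_usable_wall(self) -> Wall:
        """Candidate wall for a desk, bed, or media unit."""
        return max(self.walls, key=lambda w: w.usable_length_m())


# Hypothetical 4 m x 3 m study with one door and one window
walls = [
    Wall((0, 0), (4, 0), [Opening("door", 0.9)]),
    Wall((4, 0), (4, 3), [Opening("window", 1.5)]),
    Wall((4, 3), (0, 3)),
    Wall((0, 3), (0, 0)),
]
study = Room(label="Study", walls=walls)
best_wall = study.longest_usable_wall()
```

Here the uninterrupted 4 m wall wins, which matches the intuition that furniture zones follow from geometry and labels together rather than from geometry alone.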

This is exactly where AI, OCR, and computer vision meet: 2D to 3D is not just a geometry problem; it is also a language problem.

By training models on thousands of real residential 2D floor plans, the system learns that a narrow room labeled "Utility" behaves differently from a similarly sized "Study", and that designing spaces for people means prioritizing circulation, sightlines, and natural light, not just fitting boxes together.

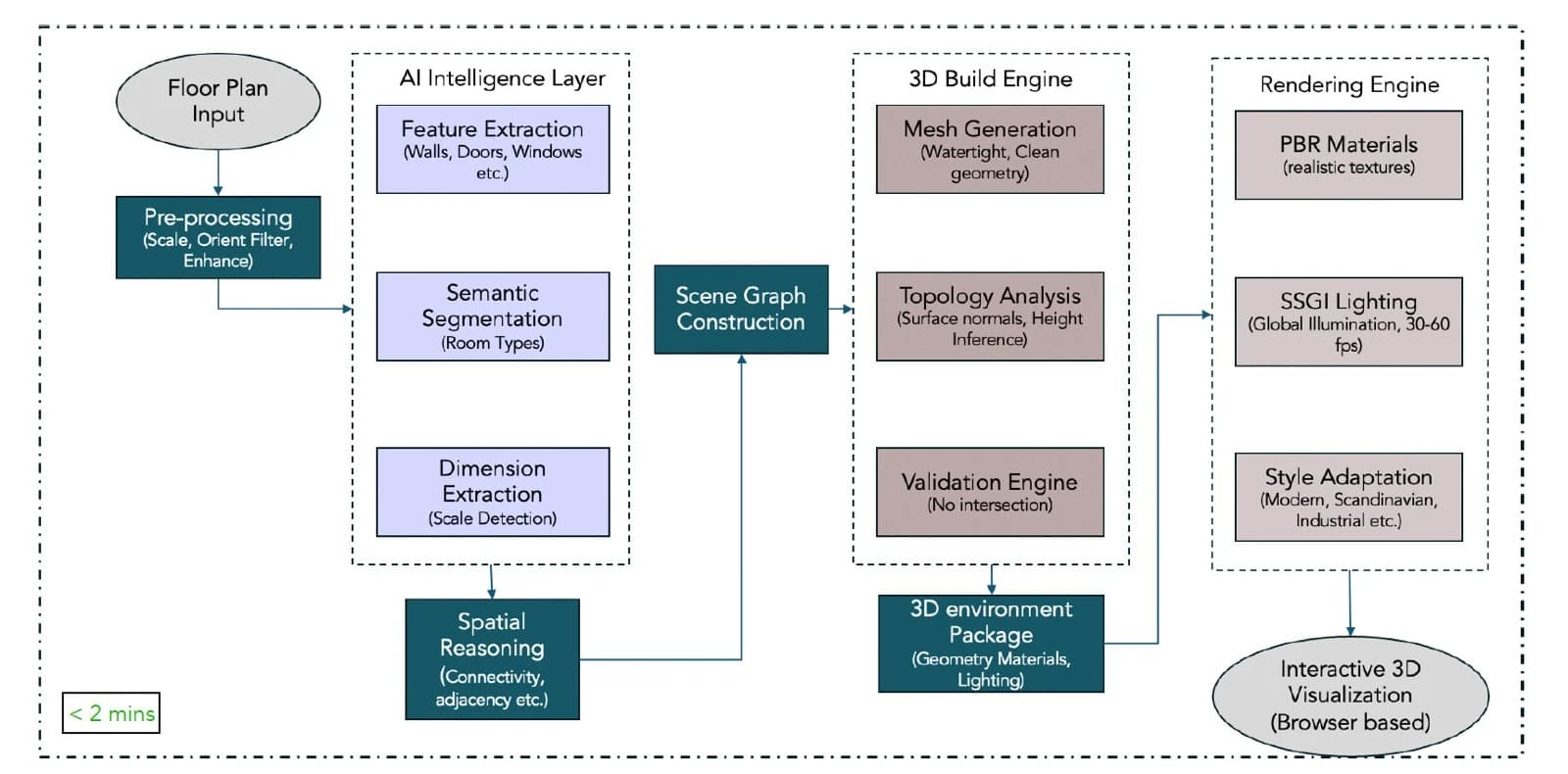

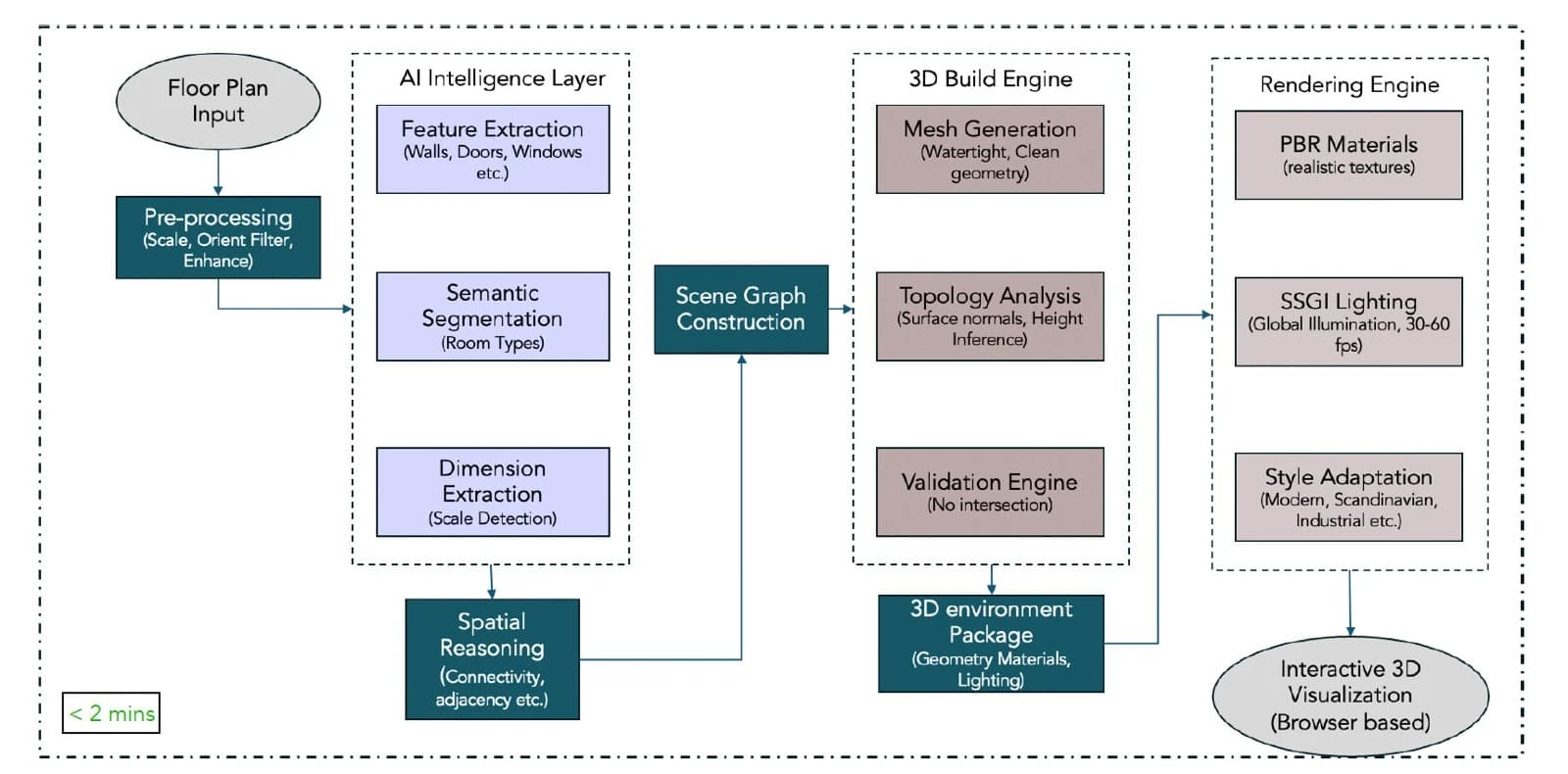

Inside the VirtualSpaces 2D to 3D Pipeline

The internal pipeline behind VirtualSpaces mirrors, but extends, the research literature.

A typical 2D floor plan passes through:

- Pre-processing: Scale and orientation are detected, noise is reduced, and linework is cleaned. This is crucial because in the wild, floor plans arrive as scans, phone photos, or exported PDFs with wildly different resolutions.

- AI intelligence layer:

  - Feature extraction isolates walls, doors, windows, and structural elements.

  - Semantic segmentation detects room types and legends, similar to how academic models segment floor plans but tuned for residential layouts.

  - Dimension extraction infers scale and critical measurements.

  - Spatial reasoning learns adjacency and connectivity, so circulation paths and lines of sight make sense.

- Scene graph construction: All these entities are assembled into a structured scene graph that represents a home rather than a collection of polygons. This graph is the backbone for 3D visualization and downstream AI interior design.

- 3D build engine:

  - Mesh generation creates watertight geometry for each room and structural element.

  - Topology analysis infers heights, floor level changes, and surface normals.

  - Validation ensures that doors are not colliding with walls, openings are consistent, and no geometry is impossible.

- Rendering engine: Physically based materials, Screen Space Global Illumination (SSGI) lighting, and style adaptation translate the structural model into photoreal interior design renders that run in a standard browser. The same engine powers AI interior decor, AI 3D visualization, and AI virtual staging.
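As an illustration of the scale and dimension steps in the list above, the following sketch estimates metres per pixel from OCR'd dimension labels paired with the pixel length of their dimension lines. It assumes metric labels and already-matched label and line pairs; the function names and sample values are hypothetical, and the median is used only as a simple way to tolerate an occasional bad reading.

```python
import re
from statistics import median
from typing import List, Tuple

_UNIT_TO_M = {"mm": 0.001, "cm": 0.01, "m": 1.0}


def parse_dimension_m(text: str) -> float:
    """Parse an OCR'd metric dimension such as '3600 mm' or '3.6m' into metres."""
    match = re.fullmatch(r"\s*(\d+(?:\.\d+)?)\s*(mm|cm|m)\s*", text.lower())
    if match is None:
        raise ValueError(f"unrecognised dimension: {text!r}")
    value, unit = match.groups()
    return float(value) * _UNIT_TO_M[unit]


def metres_per_pixel(samples: List[Tuple[str, float]]) -> float:
    """Estimate drawing scale as the median of real length / pixel length."""
    ratios = [parse_dimension_m(text) / px for text, px in samples if px > 0]
    if not ratios:
        raise ValueError("no usable dimension samples")
    return median(ratios)


# Hypothetical samples: (label text, measured pixel length of its dimension line)
samples = [("3600 mm", 360.0), ("4.2 m", 420.0), ("300 cm", 310.0)]
scale = metres_per_pixel(samples)
```

In this example the third sample is slightly off (a 300 cm label against a 310 px line), and the median still settles on roughly 0.01 m per pixel.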

This entire flow, from floor plan input to interactive 3D environment, runs in minutes instead of the days or weeks that external 3D artists often require. It allows practitioners to convert a floor plan to 3D on demand and iterate directly with clients.
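The scene graph and validation stages of this pipeline can be sketched as a small graph structure. This is a simplified illustration under stated assumptions: rooms are nodes, doors are edges, and the two checks shown (a door wider than its host wall, a room unreachable from the entry) stand in for a much larger rule set. All class and field names are hypothetical.

```python
from collections import deque
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass(frozen=True)
class Door:
    room_a: str
    room_b: str
    width_m: float
    wall_length_m: float  # length of the wall segment hosting the door


class SceneGraph:
    """Rooms are nodes; each door is an edge connecting two rooms."""

    def __init__(self) -> None:
        self.adjacency: Dict[str, Set[str]] = {}
        self.doors: List[Door] = []

    def add_room(self, name: str) -> None:
        self.adjacency.setdefault(name, set())

    def add_door(self, door: Door) -> None:
        self.add_room(door.room_a)
        self.add_room(door.room_b)
        self.adjacency[door.room_a].add(door.room_b)
        self.adjacency[door.room_b].add(door.room_a)
        self.doors.append(door)

    def reachable_from(self, start: str) -> Set[str]:
        """Breadth-first search over door connections."""
        seen = {start}
        queue = deque([start])
        while queue:
            for nxt in self.adjacency[queue.popleft()]:
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
        return seen

    def validate(self, entry: str) -> List[str]:
        """Return readable problems; an empty list means both checks passed."""
        problems = [
            f"door {d.room_a}-{d.room_b} is wider than its wall"
            for d in self.doors
            if d.width_m > d.wall_length_m
        ]
        unreachable = sorted(set(self.adjacency) - self.reachable_from(entry))
        problems += [f"room {r} is not reachable from {entry}" for r in unreachable]
        return problems


# Hypothetical home with one impossible door and one orphaned room
graph = SceneGraph()
graph.add_door(Door("Entry", "Living", width_m=0.9, wall_length_m=3.0))
graph.add_door(Door("Living", "Bedroom", width_m=0.8, wall_length_m=0.6))
graph.add_room("Utility")
problems = graph.validate(entry="Entry")
```

Keeping rooms and connections explicit like this is what lets later stages reason about circulation instead of treating the plan as loose polygons.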

Product Momentum: From 3D Shells to Lived-In Spaces

The roadmap around this engine is focused on designing spaces for people, not just generating meshes.

Key capabilities in active development include:

- White modeling plus AI furnishing: Designers place coarse furniture placeholders, and the AI generates full interior design photoreal renders with detailed materials and decor styles in real time.

- Photorealistic graphics pipeline: Physically based rendering (PBR) materials and Screen Space Global Illumination (SSGI) lighting deliver high-quality interior design 3D visualization inside a browser, without requiring a dedicated GPU.

- Dynamic floor plan editor: Parametric controls allow quick changes to walls and openings, with instant structural validation.

- First-person and orbital views: Stakeholders can move between technical axonometric views and immersive walkthroughs, which mirrors approaches used in gaming engines and NeRF-based 3D reconstruction.

- Real-time shareable links: Every design state is a URL that architects, agents, and home buyers can explore without software hand-offs.

The result is a platform where 2D to 3D computer vision AI is not a demo, but the substrate for collaboration between professionals and homeowners.

How This Helps Architects and Interior Designers

For architects and interior designers who design homes every day, the gains are practical, not abstract.

- Faster pre-sales and approvals: Teams can upload 2D floor plans, run a blueprint-to-3D conversion in minutes, and present multiple layout options or AI virtual staging concepts without outsourcing to render studios. Home buyers understand volume, light, and furniture fit much earlier, and decisions come faster.

- Reduced software hand-offs: Instead of bouncing between CAD, BIM, DCC tools, and ad hoc rendering services, the same pipeline handles floor plan to 3D, AI interior design, and AI interior decor inside one experience. This matters for residential developers running many unit variants at once.

- Augmented creative output: Designers are still in control of intent and taste. The system handles the heavy lifting: extracting structure, keeping materials consistent, testing multiple styles, and generating interior design renders, so humans can focus on designing spaces and homes for the people who will live in them.

- Clearer communication with homeowners: Photoreal AI 3D visualization, with first-person walkthroughs and shareable links, lets non-technical clients react to the actual feel of a space rather than abstract drawings.

Qualitative ROI for Residential Workflows

| Area | Traditional Workflow | With Floor Plan to 3D AI Engine | ROI Signal |
|---|---|---|---|
| Concept design | Manual CAD, hand sketches, outsourced renders | Floor plan to 3D plus AI interior design in a browser session | Higher iteration speed, more concepts explored |
| Client approvals | 2D drawings, static PDFs | Interactive AI 3D visualization, virtual staging, and style variations | Shorter decision cycles, fewer misunderstandings |
| Outsourcing spend | Repeated external render contracts | In-house use of Foursite and similar tools | Lower variable cost per project, better reuse of assets |
| Sales and marketing | Generic brochures | Photoreal experiences tailored to target buyer personas | Higher perceived quality and engagement |

Even without inserting hard numbers, most teams can map these qualitative gains directly to their own hourly rates and project timelines.

Foursite and Remodroom: Two Sides of the Same Engine

Foursite is the manifestation of this engine for floor plan to 3D at the project scale.

It takes 2D floor plans and architectural blueprints from residential developers and architects, runs them through the specialized parser, and generates interactive 3D visualization for full homes and towers.

Remodroom uses the same ideas at the room scale.

Instead of parsing a technical drawing, it reads a single room photo, uses AI to understand structure, light, and materials, then synthesizes photoreal alternatives in different styles.

For interior designers and homeowners this means:

- Testing layouts and finishes from floor plans with Foursite in early design phases.

- Using Remodroom later to fine-tune bedrooms, living rooms, and kitchens based on real photos.

- Keeping design intent coherent across 2D floor plans, 3D models, and lived-in snapshots.

The shared DNA is important.

A specialized visual language engine that understands homes makes it possible to keep geometry, materials, and style decisions consistent between planning, marketing, and renovation.

Beyond Architecture: A General Technical Document Parser

Once you view 2D to 3D for homes as technical document understanding plus spatial reasoning, it becomes clear that architecture is a starting point, not a ceiling.

Research on floor plan recognition already sits close to similar work on engineering drawings, facility management plans, and circuit diagrams:

- Surveys of deep learning for floor plan recognition emphasize multimodal models that combine layout, symbols, and OCR, which parallels approaches for mechanical and electrical schematics.

- WAFFLE, a large multimodal floorplan dataset, uses detection models to separate legends, scales, and other UI elements, patterns that are shared across technical diagrams.

- Industry I-OCR systems target engineering drawings in general and explicitly mention the need to read technical symbols, tables, and annotations, not just text.

A technical document parser that has learned the specialized visual language of residential architecture can, with retraining, be extended to:

- Structural engineering drawings: recognizing rebar layouts, slab edges, and load paths, then validating that proposed interior changes stay within structural limits.

- Mechanical, electrical, and plumbing (MEP) plans: parsing HVAC, plumbing, and electrical routes to ensure AI virtual staging respects service zones and clearances.

- Circuit schematics: understanding components and connectivity, then generating 3D panel or device layouts that match manufacturing constraints.

The core ingredients are the same: domain-specific OCR, symbol recognition, topology extraction, and a scene graph that knows the difference between lines on a page and a system that must work in the real world.
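Topology extraction is the ingredient that transfers most directly between domains. The sketch below groups line segments into connected sets by shared endpoints using union-find, which applies equally to wall runs on a floor plan or wire nets on a schematic. It is a simplified illustration: it snaps endpoints to a grid of size `tol` (an assumed parameter) and only detects endpoint-to-endpoint contact, not T-junctions or crossings.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]
Segment = Tuple[Point, Point]


def connected_components(segments: List[Segment], tol: float = 1e-3) -> List[List[int]]:
    """Group segment indices into connected sets via shared (snapped) endpoints."""
    parent = list(range(len(segments)))

    def find(i: int) -> int:
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving keeps trees shallow
            i = parent[i]
        return i

    def snap(p: Point) -> Tuple[int, int]:
        # Quantise coordinates so nearly identical endpoints share a key
        return (round(p[0] / tol), round(p[1] / tol))

    first_owner: Dict[Tuple[int, int], int] = {}
    for idx, (a, b) in enumerate(segments):
        for key in (snap(a), snap(b)):
            if key in first_owner:
                # Endpoint already seen: merge this segment with its owner
                parent[find(idx)] = find(first_owner[key])
            else:
                first_owner[key] = idx

    groups: Dict[int, List[int]] = {}
    for idx in range(len(segments)):
        groups.setdefault(find(idx), []).append(idx)
    return sorted(groups.values())


# Hypothetical input: three segments forming a connected run, one isolated segment
segments = [
    ((0.0, 0.0), (4.0, 0.0)),
    ((4.0, 0.0), (4.0, 3.0)),
    ((10.0, 10.0), (12.0, 10.0)),
    ((4.0, 3.0), (0.0, 3.0)),
]
components = connected_components(segments)
```

Whether a connected set then means "a room boundary" or "an electrical net" is decided by the domain-specific symbol and label layers, not by the geometry itself.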

Why This Space Is Still Underserved

Despite the research momentum and isolated consumer tools that promise "upload a blueprint and get a 3D model", most professional residential teams still rely on manual CAD, offline render studios, and fragmented workflows.

The reasons are straightforward:

- Few systems handle the breadth of real-world plan formats and annotation styles that appear across regions and decades.

- Generic OCR and computer vision stacks cannot reach the precision needed for permitting, sales, and construction drawings.

- Many academic systems stop at clean 3D shells instead of delivering the AI interior design and photoreal 3D visualization that stakeholders actually need.

By treating floor plans as a specialized visual language, building a robust technical document parser, and tying it directly to photoreal AI interior decor and virtual staging workflows, VirtualSpaces is trying to close that gap.

For technologists, gaming engine developers, residential real estate developers, architects, interior designers, and homeowners, that shift turns 2D to 3D from an offline service into a core capability that lives at the heart of how we design spaces for people.